Calibrated Autonomy

Building autonomous systems that operators can trust in contested environments.


Active Projects in Calibrated Autonomy

The Focus

Autonomous systems deployed in degraded, denied, and chaotic environments must satisfy three properties simultaneously: they must perform against mission objectives within their operational design domain (ODD), degrade gracefully when conditions shift outside it, and detect when they are operating beyond their competence boundary. Critically, the ODD cannot be fully pre-specified: real operational environments are open-world, unstructured, and unpredictable. We believe systems must reason over open vocabularies and ingest unstructured data, not merely perform within tightly enumerated conditions.

We are interested in frameworks that characterize and guarantee these properties from the subsystem to the system level. Performance and robustness make a system capable. Calibration makes it supervisable. Together, they are what make 1:N human command of autonomous systems possible.
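Calibration can be made measurable. One common metric, used here only as an illustration and not as the Studio's stated method, is expected calibration error (ECE): group predictions by reported confidence, then take the sample-weighted average gap between each group's accuracy and its mean confidence. A well-calibrated system that reports 75% confidence is right about 75% of the time, which is what lets a supervisor decide when to look closer. This minimal sketch assumes ten equal-width bins; the function name and inputs are hypothetical.

```python
from typing import Sequence


def expected_calibration_error(confidences: Sequence[float],
                               correct: Sequence[bool],
                               n_bins: int = 10) -> float:
    """Equal-width binned ECE: weighted mean |accuracy - confidence| per bin."""
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need non-empty inputs of equal length")
    bins: list[list[tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        if not 0.0 <= conf <= 1.0:
            raise ValueError("confidence must lie in [0, 1]")
        # Bin index for conf; conf == 1.0 is placed in the top bin.
        bins[min(int(conf * n_bins), n_bins - 1)].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if members:
            mean_conf = sum(c for c, _ in members) / len(members)
            accuracy = sum(ok for _, ok in members) / len(members)
            ece += len(members) / total * abs(accuracy - mean_conf)
    return ece


# Reports 95% confidence but is right only half the time: badly overconfident.
print(expected_calibration_error([0.95] * 4, [True, True, False, False]))
```

A low ECE alone does not guarantee useful confidence estimates (a system that always reports its average accuracy can score well), so in practice it is typically paired with accuracy and out-of-distribution detection tests.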

Core Objectives

Seeing and sensing when satellites disappear

Fusing onboard perception to maintain precision in hostile signal environments

Autonomous aircraft cannot depend on GPS as a permanent crutch. In contested theaters, navigation systems must function under jamming, spoofing, and total denial. Visual-inertial navigation addresses this by tightly coupling camera-based perception with inertial measurement, building a continuously updated estimate of position and motion from onboard sensing alone. Rather than serving as the primary reference, GPS becomes one optional input among many: a convenience, not a requirement.

The technical challenge is maintaining long-term accuracy while operating under dynamic lighting, terrain ambiguity, vibration, and aggressive maneuvering. Robust navigation requires algorithms that reconcile noisy visual features with high-rate inertial data in real time, correcting drift without introducing instability. The system must remain computationally efficient, resilient to occlusion and adversarial conditions, and reliable across radically different environments — ocean, desert, urban terrain, and low-visibility weather — all without external infrastructure.
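To make drift correction concrete, here is a deliberately simplified sketch. It assumes a loosely coupled, one-dimensional setup rather than the tightly coupled visual-inertial estimator described above: a scalar Kalman filter dead-reckons position from a biased, noisy inertial velocity and, every few steps, blends in a camera-derived position fix. The `PositionFilter` class, the `simulate` helper, and all noise values are hypothetical. A real estimator would carry 3D pose, velocity, and IMU bias states and fuse individual feature tracks.

```python
import random
from dataclasses import dataclass


@dataclass
class PositionFilter:
    """Scalar Kalman filter: inertial prediction plus visual position updates.

    Hypothetical, loosely coupled 1D simplification of visual-inertial fusion.
    """
    x: float = 0.0   # position estimate (m)
    p: float = 1.0   # variance of the estimate (m^2)
    q: float = 0.05  # variance added per inertial step (m^2); models drift
    r: float = 0.5   # variance of a visual position fix (m^2)

    def predict(self, velocity: float, dt: float) -> None:
        # Dead-reckon with the inertially derived velocity; uncertainty grows.
        self.x += velocity * dt
        self.p += self.q

    def update(self, z: float) -> None:
        # Kalman gain weights the camera fix by the relative variances.
        k = self.p / (self.p + self.r)
        self.x += k * (z - self.x)
        self.p *= 1.0 - k


def simulate(steps: int = 200, dt: float = 0.1, bias: float = 0.05,
             fix_every: int = 10, seed: int = 0) -> tuple[float, float]:
    """Return (dead-reckoning error, fused error) in metres at the final step."""
    rng = random.Random(seed)
    truth = dead_reckon = 0.0
    kf = PositionFilter()
    for k in range(1, steps + 1):
        truth += 1.0 * dt                                # true speed: 1 m/s
        v_imu = 1.0 + bias + rng.gauss(0.0, 0.1)         # biased, noisy velocity
        dead_reckon += v_imu * dt
        kf.predict(v_imu, dt)
        if k % fix_every == 0:                           # intermittent visual fix
            kf.update(truth + rng.gauss(0.0, kf.r ** 0.5))
    return abs(dead_reckon - truth), abs(kf.x - truth)


errors = [simulate(seed=s) for s in range(100)]
print("mean dead-reckoning error:", sum(e[0] for e in errors) / len(errors))
print("mean fused error:         ", sum(e[1] for e in errors) / len(errors))
```

Averaged over many seeds, the fused error stays on the order of the visual fix noise, while dead reckoning alone accumulates the uncorrected velocity bias (roughly 0.05 m/s over 20 s, or about 1 m, with these values).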

Turning commander intent into machine action

Bridging human language and autonomous mission logic

Operators communicate goals in terms of intent, priorities, and constraints — not in code. Translating that intent into executable behavior requires systems that can interpret ambiguous human language and map it to structured mission plans. The challenge is not simply understanding words, but capturing operational meaning: risk tolerance, sequencing, conditional objectives, and rules of engagement must survive the translation intact.

This demands architectures that combine language understanding with formal planning frameworks. The autonomy stack must transform high-level directives into verifiable action trees that adapt as conditions evolve. Misinterpretation is unacceptable; ambiguity must trigger clarification rather than silent failure. The system acts as an intelligent intermediary, ensuring that human strategy is faithfully represented in machine execution while preserving transparency and operator authority.
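One way to make ambiguity trigger clarification instead of silent failure is to place a strict validation step between the language model and the planner. This sketch assumes a hypothetical upstream parser that returns, for each required slot, every interpretation it found; the planner accepts a slot only when exactly one reading survives and otherwise returns questions for the operator. The slot names, the `to_plan` function, and the three-level risk scale are illustrative assumptions, not a description of the Studio's stack.

```python
from dataclasses import dataclass, field
from typing import Union

REQUIRED_SLOTS = ("objective", "area", "risk_tolerance")
RISK_LEVELS = ("low", "medium", "high")


@dataclass
class MissionPlan:
    objective: str
    area: str
    risk_tolerance: str
    steps: list[str] = field(default_factory=list)        # ordered sequencing
    constraints: list[str] = field(default_factory=list)  # e.g. rules of engagement


@dataclass
class ClarificationRequest:
    questions: list[str]


def to_plan(readings: dict[str, list[str]], steps: list[str],
            constraints: list[str]) -> Union[MissionPlan, ClarificationRequest]:
    """Build a plan only when every required slot has exactly one reading.

    `readings[slot]` lists every interpretation a (hypothetical) upstream
    language model produced for that slot. Zero or several readings become
    questions for the operator instead of a silent guess.
    """
    resolved: dict[str, str] = {}
    questions: list[str] = []
    for slot in REQUIRED_SLOTS:
        options = readings.get(slot, [])
        if not options:
            questions.append(f"What is the {slot}?")
        elif len(options) > 1:
            questions.append(f"Which {slot} is intended: {' or '.join(options)}?")
        else:
            resolved[slot] = options[0]
    risk = resolved.get("risk_tolerance")
    if risk is not None and risk not in RISK_LEVELS:
        questions.append(f"Risk tolerance must be one of: {', '.join(RISK_LEVELS)}.")
    if questions:
        return ClarificationRequest(questions)
    return MissionPlan(resolved["objective"], resolved["area"],
                       resolved["risk_tolerance"], list(steps), list(constraints))


# Two plausible readings of the search area, and no stated risk tolerance.
print(to_plan({"objective": ["track surface vessel"],
               "area": ["sector 4", "northern sector"],
               "risk_tolerance": []}, ["search", "track"], []))
```

Because `to_plan` returns either a complete plan or a clarification request, never a best guess, execution cannot start from a misread directive; a fuller system would check sequencing and rules of engagement the same way.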

Swarms that think together without a single point of failure

Distributed intelligence for collaborative autonomy

Multi-vehicle operations cannot depend on a fragile central controller. In contested environments, communications degrade, nodes fail, and adversaries actively disrupt coordination. Decentralized planning distributes decision-making across agents so that the system remains functional even when partially disconnected. Each vehicle maintains local awareness while contributing to a shared mission objective.

The challenge lies in balancing independence with cooperation. Agents must negotiate task allocation, avoid conflicts, share intent, and replan dynamically without saturating limited bandwidth. Coordination algorithms must scale from small teams to large swarms while remaining predictable and verifiable. The result is a collective system that behaves coherently under pressure — resilient not because it avoids disruption, but because it is designed to absorb it.
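Decentralized task allocation of this kind is often studied with auction and consensus methods. This sketch follows the general shape of the consensus-based auction algorithm (CBAA): each agent bids on the best task it can still win, then agents exchange only their winning-bid tables with direct neighbors, keep the element-wise maximum, and release any task on which they were outbid. It is a simplified synchronous simulation with hypothetical names (`cbaa`, `scores`, `neighbors`), not a fielded implementation; exact ties go to the higher agent id.

```python
from typing import Optional

Bid = tuple[float, int]          # (score, agent id); higher id wins exact ties
NO_BID: Bid = (float("-inf"), -1)


def cbaa(scores: list[list[float]], neighbors: list[set[int]],
         max_rounds: int = 100) -> list[Optional[int]]:
    """Simplified synchronous consensus-based auction (one task per agent).

    scores[i][j] is agent i's value for task j; neighbors[i] lists the agents
    i can currently hear. No agent ever sees a central assignment list.
    """
    n_agents, n_tasks = len(scores), len(scores[0])
    assigned: list[Optional[int]] = [None] * n_agents
    winners = [[NO_BID] * n_tasks for _ in range(n_agents)]  # local bid tables
    for _ in range(max_rounds):
        # Auction phase: each unassigned agent bids on the best task it can win.
        for i in range(n_agents):
            if assigned[i] is None:
                options = [j for j in range(n_tasks)
                           if (scores[i][j], i) > winners[i][j]]
                if options:
                    j = max(options, key=lambda t: scores[i][t])
                    assigned[i] = j
                    winners[i][j] = (scores[i][j], i)
        # Consensus phase: element-wise max over neighbours' last tables.
        snapshot = [row[:] for row in winners]
        changed = False
        for i in range(n_agents):
            for j in range(n_tasks):
                best = max([snapshot[i][j]] + [snapshot[k][j] for k in neighbors[i]])
                if best != winners[i][j]:
                    winners[i][j] = best
                    changed = True
            # An agent that learns it was outbid releases its task and rebids later.
            if assigned[i] is not None and winners[i][assigned[i]][1] != i:
                assigned[i] = None
                changed = True
        if not changed:
            break
    return assigned


scores = [[10, 5, 1], [9, 8, 2], [3, 7, 6]]
neighbors = [{1}, {0, 2}, {1}]      # line topology: 0 - 1 - 2
print(cbaa(scores, neighbors))      # [0, 1, 2]
```

In this example agent 1 first loses task 0 to agent 0 and rebids on task 1, which then displaces agent 2 onto task 2; the team settles on one task per agent without a central controller, and each message is a small fixed-size table, which matters when bandwidth is scarce.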

Autonomy that degrades gracefully instead of collapsing

Maintaining mission capability under active opposition

Contested environments introduce uncertainty at every layer: sensing is corrupted, communications are intermittent, and adversaries adapt in real time. Resilient autonomy requires systems that anticipate degradation and continue operating within safe and effective bounds. Rather than assuming ideal conditions, the architecture must be built around failure tolerance and recovery.

This involves layered redundancy, adaptive planning, and self-monitoring behavior that detects when confidence drops below acceptable thresholds. The system must recognize when to continue autonomously, when to request human intervention, and when to fall back to conservative strategies. Resilience is not a single feature; it is a design philosophy that treats uncertainty as inevitable and prepares the autonomy stack to operate through it.
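The choice between continuing, asking, and falling back can be sketched as a small mode selector driven by a self-assessed confidence score. This assumes such a score already exists (producing a calibrated one is the hard part) and uses hypothetical thresholds. The selector degrades immediately when confidence drops but requires a margin above a threshold before regaining autonomy, so a noisy estimate near a boundary does not make the system oscillate between modes.

```python
from enum import IntEnum


class Mode(IntEnum):
    # Ordered from least to most autonomous so modes compare naturally.
    FALLBACK = 0        # conservative strategy, e.g. hold or return
    REQUEST_HUMAN = 1   # keep operating safely, ask the operator
    AUTONOMOUS = 2      # continue the mission unaided


class ModeSelector:
    """Hysteretic mapping from confidence in [0, 1] to an operating mode.

    Threshold values are hypothetical placeholders, not validated settings.
    """

    def __init__(self, request_below: float = 0.7,
                 fallback_below: float = 0.4, margin: float = 0.05) -> None:
        if not 0.0 <= fallback_below < request_below <= 1.0:
            raise ValueError("need 0 <= fallback_below < request_below <= 1")
        self.request_below = request_below
        self.fallback_below = fallback_below
        self.margin = margin
        self.mode = Mode.AUTONOMOUS

    def _mode_for(self, confidence: float, offset: float) -> Mode:
        if confidence < self.fallback_below + offset:
            return Mode.FALLBACK
        if confidence < self.request_below + offset:
            return Mode.REQUEST_HUMAN
        return Mode.AUTONOMOUS

    def step(self, confidence: float) -> Mode:
        lower = self._mode_for(confidence, 0.0)
        if lower < self.mode:
            self.mode = lower            # degrade immediately
        else:
            upper = self._mode_for(confidence, self.margin)
            if upper > self.mode:
                self.mode = upper        # recover only with margin to spare
        return self.mode


selector = ModeSelector()
for c in [0.9, 0.69, 0.72, 0.76, 0.3, 0.42, 0.5]:
    print(c, selector.step(c).name)
```

Here 0.72 keeps the system in REQUEST_HUMAN even though it is above the 0.7 threshold, and 0.42 keeps it in FALLBACK; only 0.76 and 0.5 clear the margin needed to step back up.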

Keeping humans in command while machines carry the load

Designing collaboration instead of replacement

Autonomy succeeds only when it strengthens human decision-making rather than obscuring it. Effective teaming frameworks define clear boundaries between automated execution and human authority. The system must present intent, rationale, and predicted outcomes in a form operators can understand quickly, especially under stress. Transparency is not a luxury — it is a prerequisite for operational trust.

The technical challenge is building interaction models that scale with complexity. One operator managing multiple autonomous assets requires interfaces that compress information without hiding critical detail. The autonomy must anticipate operator needs, surface decisions at meaningful moments, and remain interruptible at all times. When designed correctly, the human and machine function as a single adaptive system, combining computational speed with strategic judgment.

Proving autonomy deserves confidence

Quantifying reliability before it reaches the field

Trust cannot be demanded; it must be measured. Autonomous systems require rigorous verification frameworks that evaluate behavior across simulated, synthetic, and real-world conditions. Metrics must capture not only performance but predictability, failure modes, and alignment with mission rules. The objective is to replace anecdotal confidence with quantifiable assurance.

Verification at this scale demands large simulation ecosystems, adversarial testing, and formal analysis methods that probe edge cases humans would never manually enumerate. Operators must be able to understand why the system behaves as it does and what its limits are. By formalizing trust as an engineering deliverable, autonomy transitions from experimental capability to deployable infrastructure.
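Part of quantifying reliability is knowing how much failure-free testing a claim requires. Assuming independent trials that are representative of operational conditions (a strong assumption, and one that adversarial and edge-case testing exists to stress), observing zero failures in n trials bounds the per-trial failure probability p at confidence 1 - α by solving (1 - p)^n = α, giving p ≤ 1 - α^(1/n), which is close to 3/n at 95% confidence (the "rule of three"). The helper names are hypothetical.

```python
import math


def failure_bound(n_trials: int, confidence: float = 0.95) -> float:
    """One-sided upper bound on failure probability after n failure-free trials."""
    if n_trials < 1 or not 0.0 < confidence < 1.0:
        raise ValueError("need n_trials >= 1 and 0 < confidence < 1")
    alpha = 1.0 - confidence
    return 1.0 - alpha ** (1.0 / n_trials)


def trials_needed(max_failure_rate: float, confidence: float = 0.95) -> int:
    """Smallest n whose failure-free bound is at most max_failure_rate."""
    if not 0.0 < max_failure_rate < 1.0 or not 0.0 < confidence < 1.0:
        raise ValueError("rates and confidence must lie strictly in (0, 1)")
    alpha = 1.0 - confidence
    # (1 - p)^n <= alpha  <=>  n >= ln(alpha) / ln(1 - p)
    return math.ceil(math.log(alpha) / math.log(1.0 - max_failure_rate))


n = trials_needed(0.001)   # target: at most 1 failure per 1,000 trials
print(n, failure_bound(n))
```

Supporting a 1-in-1,000 failure rate at 95% confidence takes roughly 3,000 consecutive failure-free trials, which is one reason verification at this scale leans on large simulation campaigns.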

Applications

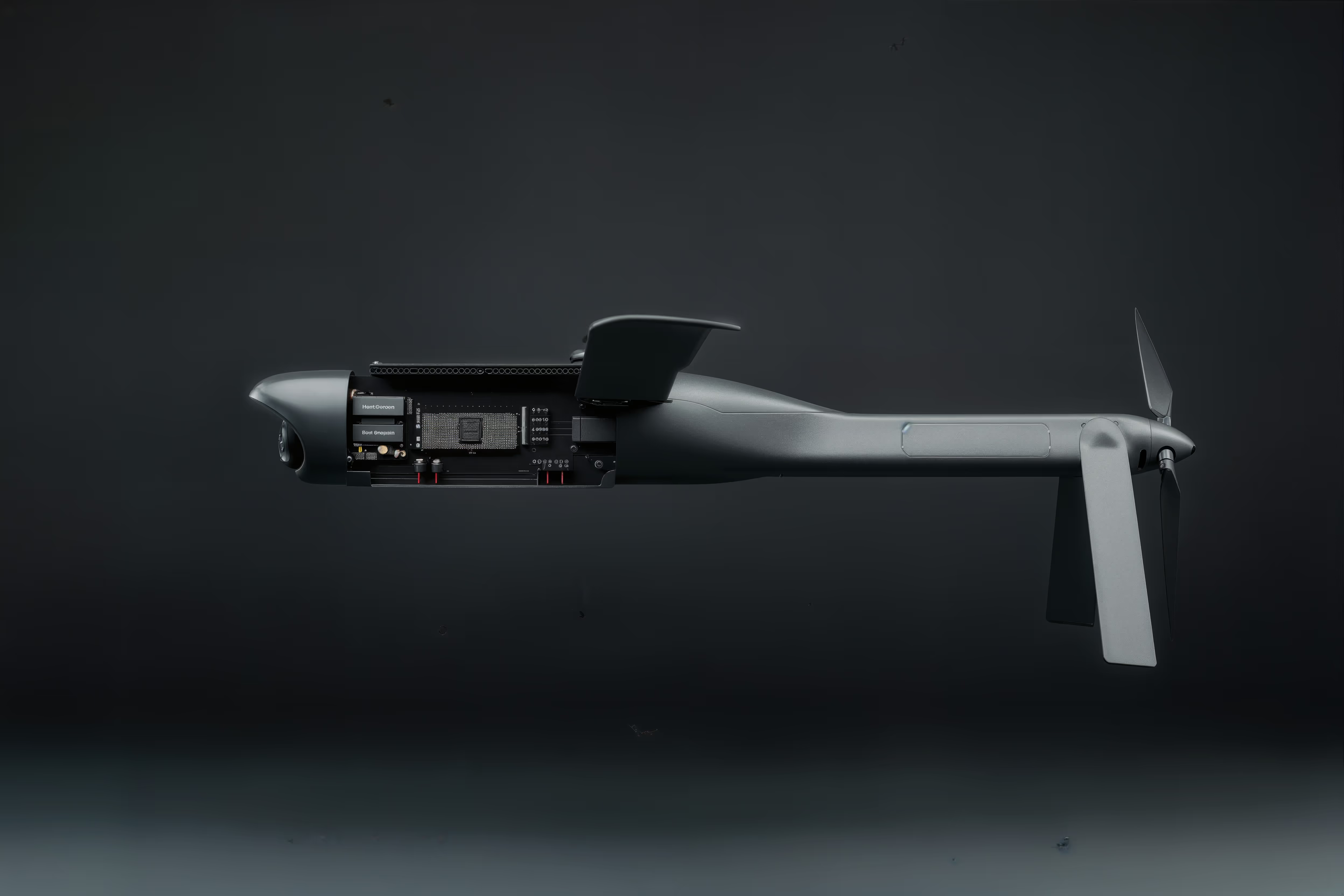

Adaptive Airborne Enterprise (A2E)

Autonomous drone swarms operating 100+ nautical miles offshore to find, fix, track, and target surface vessels. Visual-based navigation enables operations in GPS-jammed environments. Decentralized planning allows swarms to replan dynamically under threat without a continuous datalink.

AI Copilots and Decision Assistants

AI-on-the-loop systems that synthesize information, reduce cognitive load, and provide actionable courses of action to pilots. Demonstrated in simulation and VR studies with quantitative improvements in decision quality. TPS Learjet flight test conducted in September 2025.

Multi-CCA Mission Command

Enabling a single mission commander to orchestrate 20+ collaborative combat aircraft by translating high-level intent into autonomous execution. The system handles routine tasks autonomously and queries the operator only when necessary.

What We're Looking For

The AI Studio doesn’t issue RFPs. Instead, we rely on researchers and technologists working on problems in this space to reach out when they see potential alignment. If you're working on something adjacent to these areas, contact us today!

GPS-denied navigation across diverse flight regimes and conditions

Natural language processing for intent translation

Multi-agent coordination without continuous communications

Resilient autonomy under adversarial actions

Human-machine teaming and trust frameworks

Methods to verify and validate autonomous behavior


Frequently Asked Questions

What makes autonomy “trustworthy” in a defense context?

Trust is the bridge between commander's intent and machine execution. A system is trustworthy when it performs reliably in contested and denied environments, responding to adversarial interference or environmental obstacles without needing to “phone home” for instructions.

How do you encode “Commander's Intent” into an autonomous system?

We are moving away from rigid, scripted behavior. Our research centers on a system's ability to understand high-level mission objectives (the “what” and “why”) so that it can autonomously determine the “how” in real time, even when communication with the operator is severed.

Why is autonomy necessary for “Air Power at Mass”?

Current systems often require multiple people to operate a single vehicle. To achieve mass, we must flip that ratio. Autonomy allows a single operator to command a fleet, preventing operator saturation and ensuring that the human remains a decision-maker at the strategic level, not a remote pilot at the tactical level.

How does the system handle adversarial actions without operator input?

Our focus is on resilient intelligence. We develop algorithms that enable the system to detect degradation in its own sensors or interference from an adversary and adapt its behavior to continue the mission or return safely, all without constant queries to a human controller.

Operators

DAF-Stanford AI Studio exists to solve real operational problems alongside the people facing them. If you are encountering capability gaps at the edge and need autonomy that works under pressure, we are ready to partner with you. Our focus is to turn urgent field needs into deployable systems that directly strengthen the mission.

Academia

DAF-Stanford AI Studio exists to translate frontier research into real operational impact. If you are advancing new methods in autonomy, AI, or simulation and want to see that work tested in high-stakes environments, we are ready to collaborate. Our goal is to move promising ideas from the lab into systems that matter.

Industry

DAF-Stanford AI Studio exists to build deployable autonomy with people who solve hard technical problems. If you are developing AI systems that must perform under real-world constraints — power limits, contested environments, imperfect data — we are ready to work alongside you and turn that capability into operational systems.